AI Tools for Students: The Real Method to Build Projects

- May 5, 2026

- Prachi Gupta

- AI Guides

I wanted to build something useful. A daily routine planner—something I could actually use to organise my schedule, track meals, manage study time, log habits, and review my week. Real productivity tool, not a template. Something structured and complete.

I opened ChatGPT and asked: “Create a daily routine planner in Excel for me.” It gave me a blank file. No tabs, no structure, no headers. Useless. I was frustrated. If AI could generate code and write essays, why couldn’t it build a functional spreadsheet? Then I realised: the problem wasn’t the tool. It was me. I’d asked vaguely and expected a specific result.

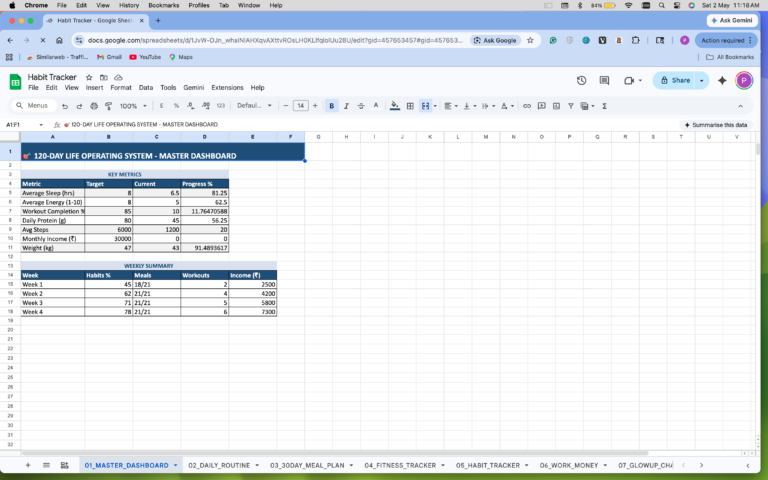

I switched to Claude. I rewrote the request with structure, detail, and clarity. Then I iterated. And iterated. And iterated again—about 5-10 times—until what I got was actually usable. The final result? A complete, multi-tab Excel workbook. Time management, meal planning, study tracking, habit logging, and weekly reviews. Everything is built in. Ready to use. But I almost shipped it broken. And that mistake taught me the real lesson about how students should actually learn AI tools.

Why “Just Ask AI” Doesn’t Work

The first mistake students make is treating AI like a search engine. You ask one question, you get one answer, you’re done. That doesn’t work for building projects.

When I said “create a daily planner,” I was vague. AI defaulted to the simplest possible output. A blank template. Technically correct, completely useless.

The second mistake is assuming the output is correct without checking it. I almost submitted my planner with blank links inside: cells that referenced data that didn’t exist, so they showed up empty where values should have been. My teacher caught it. It would have been embarrassing if she hadn’t.

These two mistakes, vague prompting and no verification, are why students think AI can’t handle real work. It’s not that AI can’t. It’s that students aren’t prompting it specifically or checking what comes back.

The Specific Prompt That Changed Everything

When I switched to Claude, I didn’t just ask for a planner. I got specific.

Here’s what I actually wrote (simplified):

“Create a detailed, well-structured Excel workbook for a Complete Daily Routine Planner. The workbook must contain 5–10 separate tabs, each with a clear purpose. Design it so a student can track their entire day, including time management, meals, productivity, and habits.

Each tab should have clear column headers, structured rows, example entries filled in, and clean formatting. Include practical time slots. TAB 1: Daily Schedule with Time Slot (5 AM – 11 PM), Activity, Priority (High/Medium/Low), Notes, Completed (Yes/No). TAB 2: Meal Planner with Time, Meal Type, Food Items, Calories, Protein/Carbs/Fats. TAB 3: Grocery Planner with Item Name, Category, Quantity, and Bought status. TAB 4: Study Planner with Subject, Topic, Study Time, Difficulty, Status. TAB 5: Goals & Tasks. TAB 6: Habit Tracker. TAB 7: Health & Wellness. TAB 8: Expense Tracker. TAB 9: Weekly Review. Make everything practical and usable daily.”

Notice the difference? Not “create a planner.” Specific tabs. Specific columns. Specific examples. Specific purpose.

That’s the actual skill. Being specific about what you want.
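
One way to force that specificity is to write the structure down before writing the prompt. The sketch below is only an illustration, not how I built my planner: it stores the first four tabs and their columns from the prompt above in a dictionary and turns them into a numbered request. The names `WORKBOOK_SPEC` and `build_prompt` are made up for this example, and only the tabs whose columns I listed are included.

```python
# Hypothetical spec: tab names and columns copied from the prompt above.
# Tabs 5-9 are left out because the prompt gave them names but no columns.
WORKBOOK_SPEC = {
    "Daily Schedule": [
        "Time Slot (5 AM - 11 PM)",
        "Activity",
        "Priority (High/Medium/Low)",
        "Notes",
        "Completed (Yes/No)",
    ],
    "Meal Planner": ["Time", "Meal Type", "Food Items", "Calories", "Protein/Carbs/Fats"],
    "Grocery Planner": ["Item Name", "Category", "Quantity", "Bought"],
    "Study Planner": ["Subject", "Topic", "Study Time", "Difficulty", "Status"],
}


def build_prompt(title, spec):
    """Turn a tab -> columns mapping into one specific, numbered prompt."""
    lines = [
        f"Create a detailed, well-structured Excel workbook for a {title}.",
        "Each tab should have clear column headers, structured rows, "
        "example entries filled in, and clean formatting.",
    ]
    for number, (tab, columns) in enumerate(spec.items(), start=1):
        lines.append(f"TAB {number}: {tab} with {', '.join(columns)}.")
    lines.append("Make everything practical and usable daily.")
    return "\n".join(lines)


prompt = build_prompt("Complete Daily Routine Planner", WORKBOOK_SPEC)
print(prompt)
```

If you can’t fill in the dictionary, you don’t yet know what you want, and the AI won’t either.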

The Iteration Process (It Takes Multiple Tries)

Claude’s first version was close. But not perfect. Some tabs were missing columns I needed. The formatting wasn’t clean enough to actually use. The example entries weren’t realistic. Dropdown options weren’t structured right. Some cells had formulas that didn’t work.

So I iterated. I’d review what Claude gave me, identify what was wrong, and prompt again with the fix: “The meal planner tab is missing protein/carbs/fats breakdown. Rebuild it with those columns, keep the time slots, make the examples more realistic.” Again. And again. About 5-10 iterations total.

That’s normal. That’s not a failure of AI. That’s how you actually use it. Most students expect one-shot perfection. That’s not how this works. You build something iteratively. You review it. You adjust. You ask for improvements. You get closer to what you actually want.

By iteration 7 or 8, I had something genuinely usable. Not perfect. But functional. Real.
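
Each round of that loop is the same small step: compare what you asked for with what you got, then ask for exactly the gap. Here is a rough sketch of that step, assuming you have typed the column headers from the AI’s draft into a list by hand. The function names and the sample draft are hypothetical.

```python
def missing_columns(required, actual):
    """Return required columns absent from the draft, ignoring case and spacing."""
    present = {name.strip().lower() for name in actual}
    return [name for name in required if name.strip().lower() not in present]


def follow_up_prompt(tab, required, actual):
    """Write one targeted fix request, or None if nothing is missing."""
    missing = missing_columns(required, actual)
    if not missing:
        return None
    return (
        f"The {tab} tab is missing these columns: {', '.join(missing)}. "
        "Rebuild it with those columns and keep everything else as it is."
    )


# Hypothetical first draft: the Meal Planner came back without the macro column.
required = ["Time", "Meal Type", "Food Items", "Calories", "Protein/Carbs/Fats"]
draft = ["Time", "Meal Type", "Food Items", "Calories"]
print(follow_up_prompt("Meal Planner", required, draft))
```

This only covers missing columns. Unrealistic examples or messy formatting still need your own eyes.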

Why Claude Beat ChatGPT for This

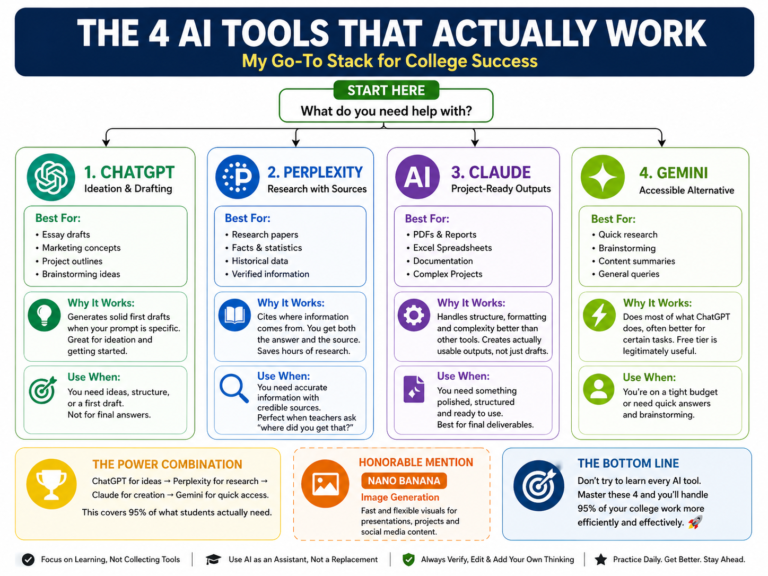

This is important: different tools are better for different things.

ChatGPT gave me blank files. When I asked for structured, formatted spreadsheets, it struggled. In my experience it’s good at text, ideas, and general writing. But for this project it didn’t handle complex Excel architecture well.

Claude handled the planner differently. It understood the structure. It built multiple interconnected tabs. It formatted columns consistently. When I asked for improvements, it refined the entire workbook, not just pieces.

For a complex, structured project like this—multiple tabs, interdependent data, specific formatting—Claude was significantly better.

This is the lesson students miss: tool selection matters. ChatGPT for brainstorming and drafting. Claude for structured projects and complex builds. Perplexity for research with sources. Different tools, different strengths.

The Blank Links Mistake (Verification Catches Real Problems)

I had the final Excel file. It looked good. Formatted well. All the tabs seemed functional. I was ready to submit it for a project.

I didn’t check it thoroughly. That was stupid.

My teacher printed the file to review it. On paper, the background of some cells appeared grey, and she saw empty links: cells where I’d referenced data that didn’t exist. Blank formulas. Incomplete structure.

She pointed it out immediately. In front of me.

I’d trusted the AI without verifying. Even after building something real, I almost submitted broken work.

That’s the verification step nobody teaches. After AI builds something, you have to:

Check for errors – Run through the actual file. Do the formulas work? Are the references correct? Do the time slots actually align?

Test it – Use it. Put in sample data. Does it work the way you expected?

Review the output – Print it if applicable. See if it looks right on paper. Catch formatting issues.

Cross-check examples – Are the sample entries realistic? Do they demonstrate the tool correctly?

I did none of this. That’s why blank links slipped through.
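
Part of the first check can be automated. The sketch below is a minimal illustration and rests on one big assumption: the workbook has already been loaded into a plain Python dictionary (sheet name, then rows, with headers in the first row). Reading a real .xlsx file would need a spreadsheet library, which is not shown here. The function name `find_problems` and the sample data are invented for the example. It flags blank cells and formulas that point at sheets that don’t exist, which is the kind of blank link my teacher found.

```python
import re

# Matches sheet references such as 'Meal Planner'!B2 or Goals!A1.
SHEET_REF = re.compile(r"'([^']+)'!|([A-Za-z_][A-Za-z0-9_.]*)!")


def find_problems(workbook):
    """List blank cells and formulas that reference sheets that don't exist.

    `workbook` is a plain dict: sheet name -> rows, where the first row
    holds the column headers.
    """
    problems = []
    for sheet, rows in workbook.items():
        headers = rows[0] if rows else []
        for row_number, row in enumerate(rows[1:], start=2):  # row 1 is headers
            # Pad short rows so cells that are missing entirely count as blank.
            padded = list(row) + [None] * (len(headers) - len(row))
            for header, value in zip(headers, padded):
                if value is None or str(value).strip() == "":
                    problems.append(f"{sheet} row {row_number}: '{header}' is blank")
                elif isinstance(value, str) and value.startswith("="):
                    for quoted, bare in SHEET_REF.findall(value):
                        target = quoted or bare
                        if target not in workbook:
                            problems.append(
                                f"{sheet} row {row_number}: '{header}' references "
                                f"missing sheet '{target}'"
                            )
    return problems


# Hypothetical workbook with one blank cell and one broken reference.
workbook = {
    "Daily Schedule": [
        ["Time Slot", "Activity", "Completed"],
        ["5 AM", "Wake up", "Yes"],
        ["6 AM", "", "No"],
    ],
    "Weekly Review": [
        ["Metric", "Value"],
        ["Study hours", "='Study Planner'!C2"],
    ],
}
for problem in find_problems(workbook):
    print(problem)
```

A script like this only catches structural gaps. You still have to open the file, use it with real data, and print it if it will be read on paper.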

The Real Difference: Trial-and-Error vs. Generic

Here’s what separates students who build quality projects from students who submit generic garbage:

Generic students ask vaguely, take whatever AI gives them, and submit it. They get average results.

I asked specifically, iterated multiple times, caught mistakes through verification, and built something actually usable. It took longer. But it was real work.

The difference isn’t being smarter. It’s being methodical. It’s refusing to accept the first output. It’s being specific about what you want. It’s testing before you submit.

That process—specificity, iteration, verification—applies to every AI project. Blog posts. Research papers. Code. Data analysis. Marketing copy. Whatever you’re building.

How Students Should Actually Use AI Tools

If you want to build real projects, not generic submissions:

Be specific in your prompt – Include what you need, how it should be structured, and what it should include. Don’t assume AI knows what’s in your head.

Iterate – First output is rarely final. Review it. Ask for improvements. Refine. Repeat. 5-10 iterations are normal and expected.

Use the right tool – Claude for structured projects, ChatGPT for brainstorming, Perplexity for research. Match the tool to the task.

Verify everything – Check it. Test it. Use it. Print it if needed. Catch errors before anyone else does.

Do the editing – AI gives you a starting point. You finish it. Remove generic lines. Add your thinking. Make it real.

This isn’t “just use ChatGPT and get results.” It’s trial-and-error, exploration, and building something that actually works. That’s how I went from frustrated with a blank Excel file to having a daily routine planner I actually use. That’s how you actually learn AI tools for real projects.
